-

"With static sites, we've come full circle, like exhausted poets who have travelled the world trying every form of poetry and realizing that the haiku is enough to see most of us through our tragedies." A line that particularly resonates in this lovely Craig Mod article on the solace of programming for yourself.

-

"One of the biggest challenges for code based artists is figuring out how to interface with traditional workflows. How can we export images, videos at resolutions and formats that work. In addition, we are building tools as we build our art. This is both a gift and a curse. It’s a gift in that we can often do things that are hard or impossible with traditional tools, but also a curse in that the tool building part of our work can be really time consuming. Imagine if every time you went to cook a meal you also had to construct the pots and pans for cooking."

Zach Lieberman on recent work on editorial imagery, built in code.

-

Enjoyed this write-up from Tom MacWright, if only because I spend a lot of my time writing Rails, still, even in 2020-1. It's nice to be reminded by somebody thoughtful, but coming from outside, that yes, there's still a lot to like in your part of the world, that it's not an ideological dead-end. And yes, that the _culture_ around the Ruby ecosystem really is, by and large, a good one. Sure, we don't have strong typing (well, we kinda do now), but we do have lots of great _practice_ around testing, and writing code in the first place. Not having IntelliSense™ is sometimes an advantage. Also, having wrapped a four-month Ruby contract recently, it's just such a nice language to write – and to *think* in.

(I'm with Tom on the whiffiness of all versions of the asset pipeline / webpacker / whatever it is we're doing this week.)

-

"This site contains the original source code for Elite on the BBC Micro, with every single line documented and (for the most part) explained."

Assembly is, it turns out, dark, dark magic. This is a very impressive thing to pore over – like Lions' Guide, but for Elite.

-

Aha: a course for people who can program already! Good; Python is my Umpteenth language and I can just about bodge it together, but it'd be nice to know it better. Might hammer at this over some evenings.

-

"…he doesn’t strike me as someone that Hubertus Bigend would hire. He strikes me as somebody that Hubertus Bigend would trick an opponent or enemy into hiring.”

-

"Folders is a language where the program is encoded into a directory structure. All files within are ignored, as are the names of the folders. Commands and expressions are encoded by the pattern of folders within folders… Folders is a Windows language. In Windows, folders are entirely free in terms of disk space! For proof, create say 352,449 folders and get properties on it."

Endless, recursive, screaming.

-

Loving Mohit Bhoite's circuit sculptures, where resistors, LEDs and brass rods become structural elements within the circuits to beautiful effect. Just gorgeous.

-

"COBOL is often a source of amusement for programmers because it is seen as old, verbose, clunky, and difficult to maintain. And it’s often the case that people making the jokes have never actually written any COBOL. We plan to give them a chance: COBOL can now be used to write code for Cloudflare’s serverless platform Workers."

Not an April Fool; instead, a deep dive for newcomers to COBOL, a platform to make it on, and some movie trivia. Great blogging all around.

planetary, a sequencer.

01 March 2020

I wrote a music sequencer of sorts yesterday. It’s called planetary.

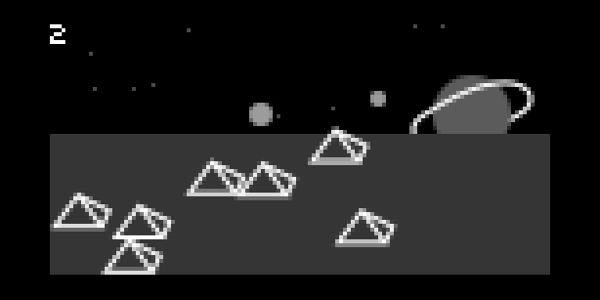

It looks like this:

and more importantly, it sounds and works like this:

In short: it’s a sequencer that is deliberately designed to work a bit like Michel Gondry’s video for Star Guitar:

I love that video.

I’m primarily writing this to have somewhere to point at to explain what’s going on, what it’s running on, and what I did or didn’t make.

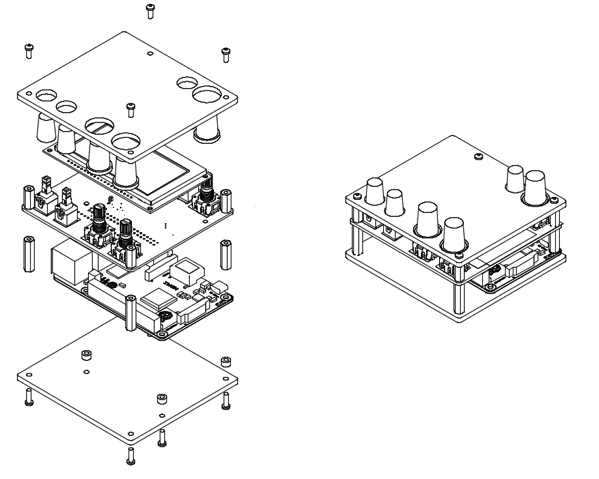

It runs on a device called norns.

Norns

norns is a “sound computer” made by musical instrument manufacturer monome. It’s designed as a self-contained device for making instruments. It can process incoming audio, emit sounds, and talk to other interfaces over MIDI or other USB protocols. A retail norns has a rechargeable battery in it, making it completely portable.

There’s also a DIY ‘shield’ available, which is what you see in the above video. This is a small board that connects directly to a standard Raspberry Pi 3, and runs exactly the same system as the ‘full-fat’ norns. (A retail norns has a Raspberry Pi Compute Module in it.) You download a disk image with the full OS on, and off you go. (The DIY version has no battery, and mini-jack I/O, but that’s the only real difference. Still, the cased thing is a thing of beauty.)

norns is not just hardware: it’s a full platform encompassing software as well.

norns instruments are made out of one or two software components: a script, written in Lua, and an engine, written in SuperCollider. Many scripts can all use the same engine. In general, a handful of people write engines for the platform; most users are writing scripts to interface with existing engines. Scripts are certainly designed to be accessible to all users; SuperCollider is a little more of a specialised tool.

Think of a script as a combination of UI processing (from both knobs/encoders on the device, and incoming MIDI-and-similar messages), and also instructions to give to an engine under the hood. An engine, by contrast, resembles a synthesizer, sampler, or audio effect that has no user interface – just API hooks for the script to talk to.

The scripting API is simple and expressive. It lets you do things you’d need to do musically, providing support not only for screen graphics but also for arithmetic and scale quantisation, along with a number of free-running metro objects that can equally be metronomes or animation timers. It’s a lovely set of constraints to work against.

There’s also a lower-level thing built into norns called softcut, which is a “multi-voice sample playback and recording system”. The best way to imagine softcut is as a pair of pieces of magnetic tape, a bit over five minutes long, and then six ‘heads’ that can record, play back, and move all over the tape freely, as well as choosing sections to loop, and all playing at different rates. As a programmer, you interface with softcut via its Lua API. softcut can make samplers, or delays, or sample players, or combinations of the above, and it can be used alongside an engine. (Scripts can only use one engine; softcut is not an engine, and so is available everywhere.)
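To make the tape-and-heads picture a bit more concrete, here is a toy model in plain Python. It is not softcut and not its Lua API: the `Head` class and its field names are invented for illustration, and it only tracks where a head sits on the tape rather than producing any audio.

```python
from dataclasses import dataclass


@dataclass
class Head:
    """One head over a shared 'tape' (a toy model, not softcut itself)."""

    position: float    # seconds into the tape
    loop_start: float  # start of the looped section, in seconds
    loop_end: float    # end of the looped section, in seconds
    rate: float = 1.0  # 1.0 = normal speed; negative values run backwards

    def advance(self, dt: float) -> float:
        """Move the head by dt seconds of real time, wrapping inside its loop."""
        length = self.loop_end - self.loop_start
        # Python's % always returns a non-negative result for a positive
        # divisor, so the same line handles forward and reverse playback.
        offset = (self.position - self.loop_start + self.rate * dt) % length
        self.position = self.loop_start + offset
        return self.position


# Two heads sharing one tape, looping different sections at different rates.
lead = Head(position=1.0, loop_start=1.0, loop_end=3.0, rate=1.0)
texture = Head(position=10.0, loop_start=10.0, loop_end=12.0, rate=-0.5)
```

Each head keeps its own loop points and rate, which is the property that lets several voices read the same recording in quite different ways.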

norns even serves as its own development environment: you can connect to it over wifi and interface with maiden, which is a small IDE and package manager built into it. The API docs are even stored on the device, should you need to edit without an internet connection.

In general, that’s as low level as you go: writing Lua, perhaps writing a bit of SuperCollider, and gluing the lot together.

Writing for norns is a highly iterative and exploratory process: you write some code, listen to what’s going on, and tweak.

That is norns. I did none of this; this is all the work of monome and their collaborators who pieced it together, and this is what you get out of the box.

All I did was build my own DIY version from the official shield, and create some laser-cut panels for it:

…which I promptly gave away online.

Given all that: what is planetary?

Planetary

To encourage people to start scripting, Brian – who runs monome – set up a regular gathering where everybody would write a script in response to a prompt, and perhaps some initial code.

For the first circle, the brief gave three samples, a set user interface, and a description of what should be enabled:

create an interactive drone machine with three different sound worlds

- three samples are provided

- no USB controllers, no audio input, no engines

- map

  - E1 volume

  - E2 brightness

  - E3 density

  - K2 evolve

  - K3 change worlds

- visual: a different representation for each world

build a drone by locating and layering loops from the provided samples. tune playback rates and filters to discover new territory.

parameters are subject to interpretation. “brightness” could mean filter cutoff, but perhaps something else. “density” could mean the balance of volumes of voices, but perhaps something else. “evolve” could mean a subtle change, but perhaps something else.

I thought it’d be fun to take a crack at this, and see what everyone else was up to.

A thing I’ve found in my brief scripting of norns prior to now is how important the screen can be. I frequently think about sound, but find myself drawn to how it should be implemented or appear, or how the controls should interact with it.

So I started thinking about the ‘world’ as more than just an image or visualisation, but perhaps a more involved part of the user interface, and then I thought about Star Guitar, and realised that was what I wanted to make. The instrument would let you assemble drones with a degree of rhythmic sample manipulation, and the UI would look like a landscape travelling past.

The other constraint: I wanted to write it in an afternoon. Nothing too precious, too complex.

I ended up writing the visuals first. They are nothing fancy: simple box, line, and circle declarations, written fairly crudely.

As they came to life, I kept iterating and tweaking until I had three worlds, and the beginnings of control over them.

Then, I wired up softcut: the three audio files were split between the two buffers, and I set three playheads to read from points corresponding to each file. Pushing “evolve” would both reseed the positions of objects in the world, and change the start point of the sample. Each world would run individually and simultaneously, and worlds 2 and 3 would start with no volume and get faded in.
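As a rough sketch of what “evolve” does, here is a plain-Python model rather than the actual Lua from planetary. The `World` class, the object count, the loop length and the 128-pixel screen width are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass, field

SCREEN_WIDTH = 128  # assumed screen width in pixels


@dataclass
class World:
    """Hypothetical stand-in for one world and its slice of the buffer."""

    sample_start: float       # where this world's audio file begins (seconds)
    sample_length: float      # length of that file (seconds)
    loop_length: float = 2.0  # assumed length of the playing loop (seconds)
    object_xs: list = field(default_factory=list)
    loop_start: float = 0.0

    def evolve(self, rng: random.Random, n_objects: int = 8) -> None:
        """Reseed the scenery and move the loop to a new point in the sample."""
        # New horizontal positions for the objects drawn in this world.
        self.object_xs = [rng.randrange(SCREEN_WIDTH) for _ in range(n_objects)]
        # Pick a start point that keeps the whole loop inside this world's file.
        latest = self.sample_start + self.sample_length - self.loop_length
        self.loop_start = rng.uniform(self.sample_start, latest)


night = World(sample_start=60.0, sample_length=30.0)
night.evolve(random.Random())
```

The point of the sketch is only that one button press changes both what you see and what you hear for that world.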

The worlds also run at different tick-rates: the fastest is daytime, with 40 ticks-per-second; space runs at 30tps, and night runs at 20tps.

I hooked up the time of day to filter cutoff, tuned the filter resonance for taste, and then mainly set to work fixing bugs and finding good “starting points” for all the sounds. On the way, I also added a slight perspective tweak – objects in the foreground moving faster than objects at the rear – which added some nice arrhythmic influence to the potentially highly regular sounds.
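For the curious, the tick rates, the perspective tweak and the time-of-day filter could be modelled along these lines. This is a hedged Python sketch and not the script itself: only the three tick rates come from the description above, while the speeds, the linear depth scaling, the cutoff range and the exponential curve are guesses.

```python
# Tick rates as described above; everything else here is assumed.
TICKS_PER_SECOND = {"day": 40, "space": 30, "night": 20}


def pixels_per_tick(depth: float, near_speed: float = 2.0, far_speed: float = 0.5) -> float:
    """Speed of an object at a given depth (0.0 = foreground, 1.0 = rear)."""
    # Linear blend between the foreground and background speeds.
    return near_speed + (far_speed - near_speed) * depth


def pixels_per_second(world: str, depth: float) -> float:
    """On-screen speed once the world's own tick rate is taken into account."""
    return TICKS_PER_SECOND[world] * pixels_per_tick(depth)


def cutoff_hz(time_of_day: float, low: float = 200.0, high: float = 8000.0) -> float:
    """Map time of day (0.0 to 1.0) onto a filter cutoff, exponentially."""
    # An exponential curve gives equal musical intervals per step of input.
    return low * (high / low) ** time_of_day
```

Because objects at different depths travel at different speeds, they take different amounts of time to cross the screen, which is one plausible reading of where the arrhythmic feel comes from.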

In the end, planetary is about 300 lines of code, of which half is graphics. The rest is UI and softcut-wrangling – there’s no DSP of my own in there.

I was pleased, by the end, with how playable it is. It takes a little preparation to play it well, and also some trust in randomness (or is that luck?). You can largely mitigate that randomness by listening to what’s happening and thinking about what to do next.

Playing planetary you can pick out a lead, add some texture, and then pull that texture to the foreground, increasing its density to add some jitter, before evolving another world and bringing that forward. It’s enjoyable to play with, and I find that as I play it, I both listen to the sounds and look at the worlds it generates, which feels like a success.

I think it meets the brief, too. It’s not quite a traditional drone, but I have had times where I have managed to dial in a set of patterns that I have left running for a good half hour without change, and I think that will do.

planetary only took a short afternoon, too, which is a good length of time to spend on things these days. I’ve played with it a good deal since I stopped coding. It has also encouraged me to play with softcut a bit more in the next project I work on, and perhaps to keep trying simple, single-purpose sound toys, rather than grand apps, on the platform.

Anyhow – I hope that clarifies both what I did, and what the platform it sits on does for you. I’m looking forward to making more things with norns, and as ever, the monome-supported community continues to be a lovely place to hang out and make music.

-

Greatly enjoyed eevee's history of CSS and browser-based code; particularly, I enjoyed the moment where you're following along with things you knew… and then you viscerally go "oh, _here's_ where I began!" I twinged as I remembered where I began, my move away from table-based layout… and then the point where I started battling quirks mode for a living…

-

"Everything is Someone is a book about objects, technology, humans, and everything in-between. It is composed of seven “future fables” for children and adults, which move from the present into a future in which “being” and “thinking” are activities not only for humans. Absorbing and thought-provoking, this collection explores the point where technology and philosophy meet, seen through the eyes of kids, vacuum cleaners, factories and mountains.

From a man that wants to become a table, to the first vacuum cleaner that bought another vacuum cleaner, all the way to a mountain that became the president of a nation, each story brings the reader into a different perspective, extrapolating how some of the technologies we are developing today, will blur the line between, us, devices, and natural beings too."

Simone has a book out!

-

"And, when you free programming from the requirement to be general and professional and SCALABLE, it becomes a different activity altogether, just as cooking at home is really nothing like cooking in a commercial kitchen. I can report to you: not only is this different activity rewarding in almost exactly the same way that cooking for someone you love is rewarding, there’s another feeling, one that persists as you use the app together."

This is great. I am always bewildered by the direct equivalence of learning-to-code and learning-to-make-money. Instead, learning to cook for yourself – just well enough for you and the few people who need you – is a nice metaphor, as is cookery itself.

Also: oh for something like "Hypercard for iOS", and oh for an end to code-signing and developer accounts and professionalisation-with-no-meaningful-alternative.